I’ve been thinking lately about two subjects that may not seem, on the surface, to have much to do with one another: AI and space travel.

One of them endlessly unfolds on our computer screens, in our workplaces, in schools, and across creative life. The other recently played out hundreds of thousands of miles from Earth, as NASA’s Artemis II crew traveled around the moon. One story is about machines beginning to imitate more of what humans do. The other is about humans leaving Earth, looking back, and seeing home differently.

And yet both stories seem to lead back to a similar question: what it means to be human.

That may sound like a large claim for what is, in one case, a technology story and, in the other, a space story. But after watching the reaction to Artemis II, I don’t think it’s much of a stretch.

More than a moon mission

Artemis II was about engineering, navigation, systems testing, and science, as such missions always are. But it also stirred up something less technical and harder to measure. People didn’t respond only with admiration for the achievement. They responded with awe. They talked about beauty, perspective, humility, and wonder. The astronauts themselves seemed to be grappling with some of the same feelings.

That response isn’t hard to understand. Apollo 17, the last crewed mission to the moon before Artemis II, flew in 1972. More than half a century has passed since then. For most people alive today, moon missions aren’t something they remember; they’re history, and they might as well be ancient history.

Speaking of the ancients, Artemis II was named for Apollo’s twin sister in Greek mythology. While that naming exercise explicitly tied it to the earlier era of moonshots, this didn’t simply feel like a continuation of a familiar story. For many, it felt like the return of something they had never really experienced for themselves.

I believe a big part of what Artemis II captured was perspective.

When astronauts travel far enough from Earth and look back, the scale of ordinary life shifts. The planet looks much smaller, borders appear entirely arbitrary, and many of the things we organize our lives around start to seem less solid than we imagined. Earth, seen from that distance, doesn’t look like an endless backdrop for human conflict and ambition. It looks fragile, finite, and astonishingly alone.

There’s even a term for that shift in perception: the overview effect. It's a phrase coined by writer Frank White, who has spent years documenting how more than 40 astronauts have described the experience of seeing Earth from space. As an NPR story recounts, White describes the overview effect as "an emotional or mental reaction strong enough to disrupt that person's previous assumptions about humanity, Earth, and/or the cosmos. Everyone's overview effect is unique to them, but there are reactions that are more common than others."

In fact, William Shatner – best known as Star Trek's Captain Kirk – experienced "profound grief" when he flew to the edge of space aboard a Blue Origin capsule in 2021. "I was crying," Shatner told NPR. "I didn't know what I was crying about. I had to go off some place and sit down and think, what's the matter with me? And I realized I was in grief."

The AI unsettlement

So, where does artificial intelligence enter the picture?

AI and space travel are not the same kind of story. In fact, they unsettle us in very different ways. Space travel confronts us with scale, while AI confronts us with resemblance. Space travel tends to remind us how small we are, while AI has a way of making us ask what, exactly, makes us special.

That’s where the connection begins, at least for me.

The vastness of space can be humbling in an obvious way. It reminds us that human beings aren’t the center of the universe, and never were. Whatever importance we assign ourselves in daily life, the cosmos isn’t arranged around us. For some people, that realization is disorienting. For others, it’s oddly calming. Either way, it tends to cut the ego down to size.

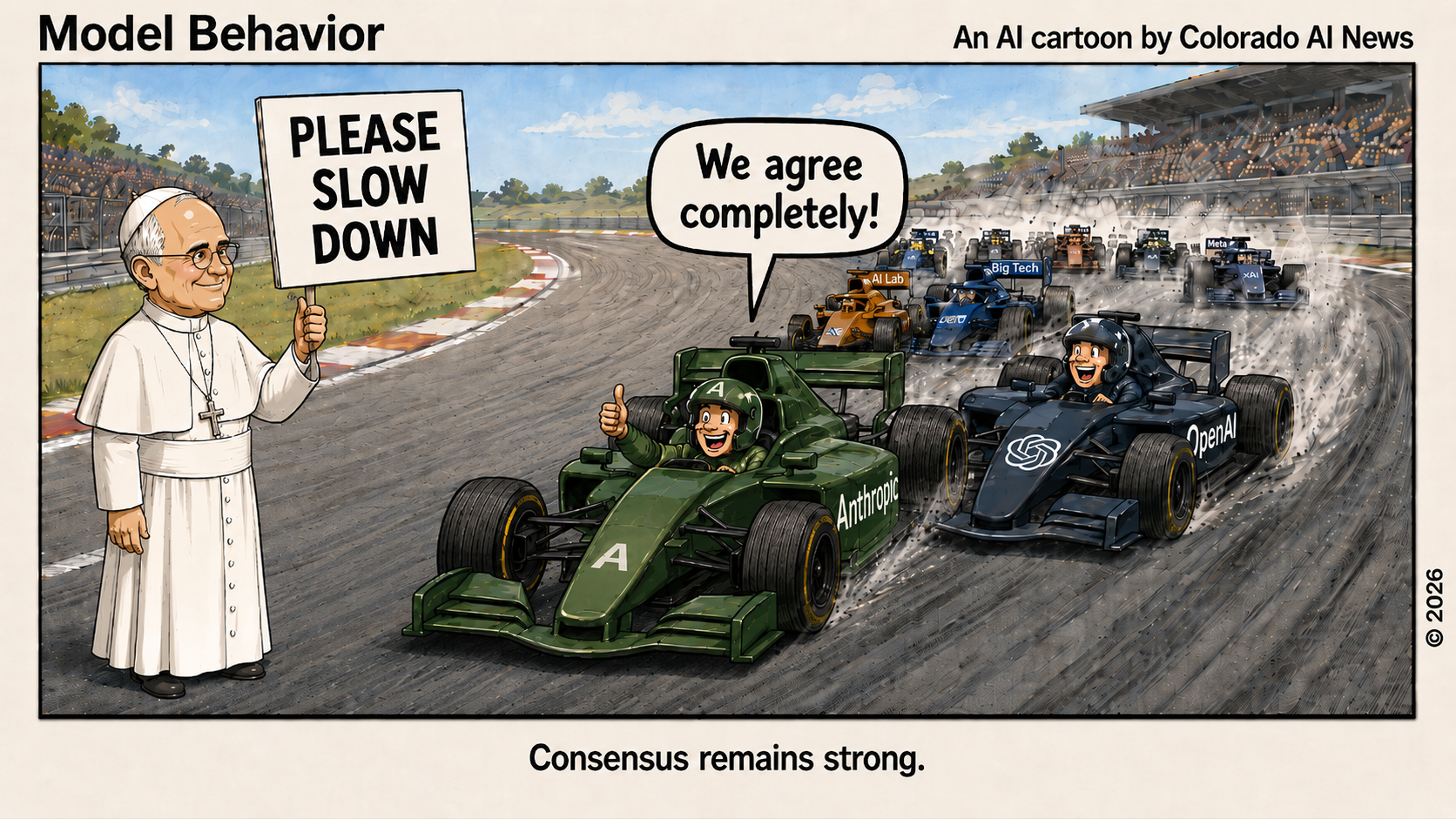

AI is unsettling in a different way. It doesn’t make us feel cosmically small, but it makes us feel less secure about some of the capacities we once assumed were distinctly ours. When machines can produce fluent language, generate images, summarize arguments, mimic conversation, and handle more and more tasks that once seemed to require human intelligence, the question hits harder than it used to: What is it, exactly, that makes us human?

That’s not a new philosophical question; we have been asking versions of it for a very long time. But AI has made it newly immediate, pulling the question out of the seminar room and bringing it into everyday life.

If a machine can do more and more of what we once thought of as ‘our’ work, then what is left that is distinctly ours?

Intelligence? Creativity? Judgment? Moral reasoning? Consciousness? Wisdom? Are those all the same thing, or have we sometimes bundled them together too casually?

I don’t think AI has answered these questions. If anything, it has exposed how incomplete some of our earlier answers were.

Beyond science and engineering

For a long time, it was easy to assume that human beings were defined, in large part, by intelligence, language, inventiveness, and creativity. Those things still matter enormously.

But AI has complicated the picture. It has shown that some forms of language and creativity, or at least some approximations of them, may not be as exclusively human as we once assumed. That doesn’t make human beings less valuable, but it does mean we need to think more carefully about what, in fact, we value most.

This is where both artificial intelligence and space travel begin to move beyond science and engineering, as important as those fields are. Deep dives into AI and space quickly draw us into older territories: philosophy, religion, ethics, literature, and history. The biggest questions they raise can’t be answered by technical definitions alone.

A rocket can be explained in great detail, but that doesn’t fully account for what happens when someone sees Earth from deep space and feels changed by it. An AI model can be described in terms of training data, compute, and probabilities, but that doesn’t settle what consciousness is, or whether prediction is the same as understanding, or what kind of responsibility humans bear when they build tools whose capabilities continue to grow.

In that sense, both AI and space travel push us past the comfort of technical language and into more difficult terrain. That may be one reason they both fascinate and unsettle people. They aren’t just changing what we can do. They challenge how we understand ourselves.

Human beings have been looking upward and asking large questions for eons. Long before rockets, moonshots, and NASA, the night sky was already shaping what we thought about and how we thought about ourselves. In that sense, Artemis II didn’t invent this response; it simply reawakened it.

What still remains

AI, though obviously different from space exploration, may be reviving a similar kind of reflection. Not by lifting our eyes upward, but by turning them inward. Is judgment merely the ability to produce a useful answer, or does it require experience, values, and accountability? If machines can imitate more of what we do, does that narrow our humanity, or does it force us to define it more honestly?

That last possibility interests me the most.

Perhaps being human was never best defined by being the biggest mind in the room, or the fastest processor, or the unquestioned center of the story. Perhaps those were always somewhat flattering ways of describing ourselves. Perhaps what matters more to being human has to do with consciousness, responsibility, self-awareness, moral choice, and the capacity to make meaning, especially when no tidy answer presents itself.

That doesn't make AI or space travel less important. It makes them important in a different way. They become not just stories of capability, but stories of self-understanding.

Seen in that light, Artemis II was more than a successful moon mission, and AI is more than a business story, a productivity story, or even an innovation story. Each, in its own way, asks us to pause and approach these developments with a little humility, reminding us that technological progress doesn’t make the oldest human questions disappear. If anything, it can bring them into sharper focus.

What does it mean to be human in a universe that is vast beyond comprehension? What does it mean to be human in a world where machines can increasingly imitate aspects of human intelligence and creativity? Those are different questions, but only up to a point. Both ask us to think more carefully about what is essential and what is merely flattering. Both ask whether we’ve confused power with wisdom, output with understanding, or capability with meaning.

Do you find that conclusion bleak? I find it clarifying.

What remains, after the scale of the cosmos has taken us down a peg and after AI has complicated some of our assumptions about intelligence and creativity, is still quite a lot.

What remains is the species that can look back at Earth from near the moon and feel awe. What remains is the species that can build extraordinary tools and still ask whether those tools are being used wisely. What remains is the species that must judge, care, protect, create meaning, and live with the consequences of what it creates.

That seems to me no small thing.

Perhaps that’s the deepest link between AI and space travel. They don’t simply expand human capability. They also test human self-understanding. They remind us that progress, impressive as it is, doesn’t answer the oldest questions for us. It simply forces us to keep asking those questions.

In the end, maybe the best guidance is also the simplest: Be human.