At a time when humanity is building something it admits it may not be able to control – or even slow down – members of the RMAIIG (Rocky Mountain AI Interest Group) community were invited to an advance screening of a movie that holds up a mirror: "The AI Doc: Or How I Became an Apocaloptimist."

This is the film our society needs RIGHT NOW. Sundance gave us a gift: a film that holds the AI duality of the moment without flinching. It’s a MUST-SEE when it is released on March 27th.

We start with the builders. Fei-Fei Li, who taught machines to see, and Geoffrey Hinton, the godfather who now grieves what he made, came to this work with the tenderness of parents. The early researchers weren't warlords. They were curious. Maternal, even. In fact, Hinton suggests our only way out of our present AI quagmire is to train our models to be maternal. To us. The humans who will become as inconsequential to superintelligence as ants.

Now we have Dario Amodei, the sweet, nerdy kid who genuinely believes we'll be fine (and frankly, if we're not, there's nothing we can do about it, so why worry), flanked by his protective little sister Daniela. Meanwhile, somewhere in the room, a parent asks: will my kid even make it to high school, or will superintelligence cause an abrupt extinction event?

The film's co-director Daniel Roher is expecting his first child. He gave that feeling a name: Anxiety Mountain. The place you live when you realize that your life's work and your child are entering the same terrifying world at the same time — and you can protect neither. For artists and technologists, that's existential. For parents, it's visceral. A 3:00 am terror. Your heart, suddenly outside your body, utterly unprotected, in a world that gets harder to explain every single day.

The producers of this film are the same ones who gave us "Everything Everywhere All at Once" – a film about infinite chaos, overwhelming possibility, and the stubborn insistence that human connection is what makes any of it mean anything.

The irony is almost unbearable; the producers have already told this story. Artistically, it's stunning. And now Daniel Roher is living it, and existential dread is not a metaphor. Sam Altman, who was interviewed, is standing on the same peak, baby any day now, choosing his words with a new kind of care. You can hear the weight landing differently when the future stops being abstract. When Altman says the word “pressure,” it actually feels heavy.

I think about it with my kids. Every. Single. Day.

Conspicuously absent? Zuckerberg and Musk. The men who built empires on the premise of connection – and delivered division. They didn't come. Maybe the questions were too human.

There's a word nobody in power wants to say out loud: obligation. What do we owe each other? What do we owe the children who will inherit whatever we build or fail to build here? What is our duty as technologists? The researchers who started this work understood it intuitively – it's why Hinton left, and it's why so many early builders have become reluctant prophets. But somewhere between the lab and the launch, obligation got rebranded as competitive liability.

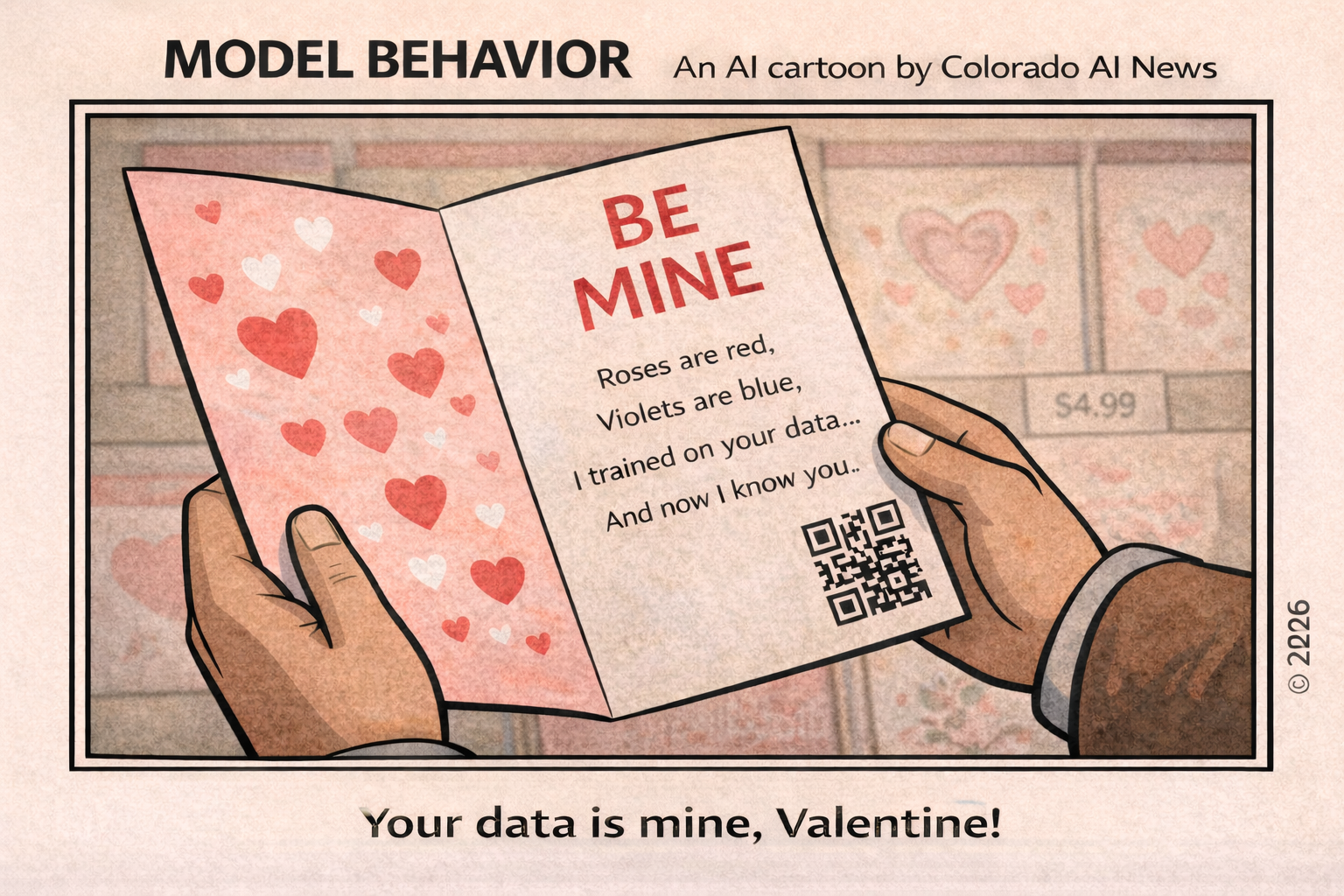

Which brings us to the incentive structures. The reason we can't slow down, we're told, is the race. China. The competitor. The because because because. But who decided this was a race? Who benefits if it is? Because the people telling us we must win at all costs are, almost without exception, the people who stand to own the finish line. Society is simply the terrain. A pesky something to manage on the way to victory.

But I must pose the question, dear reader: Is there actually a race? Or is that the story capital tells itself – and us – to justify moving faster than wisdom allows? The manifesto energy in the tech community right now isn't about saving humanity. It's about winning. And when the dust settles, I fear, winnings (or losses) will not be shared equitably.

Every single major AI lab has looked directly into the possibility of superintelligence and said, out loud: Yes — this could end badly. They've signed letters. Written essays. Testified. They understand the stakes with startling clarity. And then they go back to building. YOLO, apparently, is now an existential strategy.

Then they turn to the government and ask to be regulated. The same government they've outpaced, outspent, and outmaneuvered for a decade. Like toddlers who've figured out how to unlock the front door asking their exhausted, ill-equipped parent to please install a better lock. The danger is real. The ask is genuine. But the sequencing is insane. They know. They're doing it anyway. And they're asking someone else to stop them.

Which leaves us with no one minding the door. The fix most often pointed to, the government (average member in their early sixties, most of them former lawyers, many still printing out emails), is nowhere near knowledgeable enough to write rules for a technology that is rewriting everything. The fox isn't guarding the henhouse. The henhouse doesn't even have a fox. Frankly, there doesn't even seem to be a door.

The hype machine is deafening. And yet Anthropic's own research tells us only 1% of our society's members are power users of AI. An ocean separates the people who get it from those who refuse to engage. We are not having the same conversation.

Can we stop? (No, seriously, can we just pause?) Probably not. We know speed tends to produce less intentional deployment, but as the film points out, that train has already left the station.

So what do we do?

The film's answer, and mine, is the oldest technology we have: each other. Everyday people refusing to be spectators in a story about their own future. Connection. Community. The radical act of demanding better.

We are not powerless. We are just late to the meeting.

We are all on Anxiety Mountain. The question is whether we will climb it together.