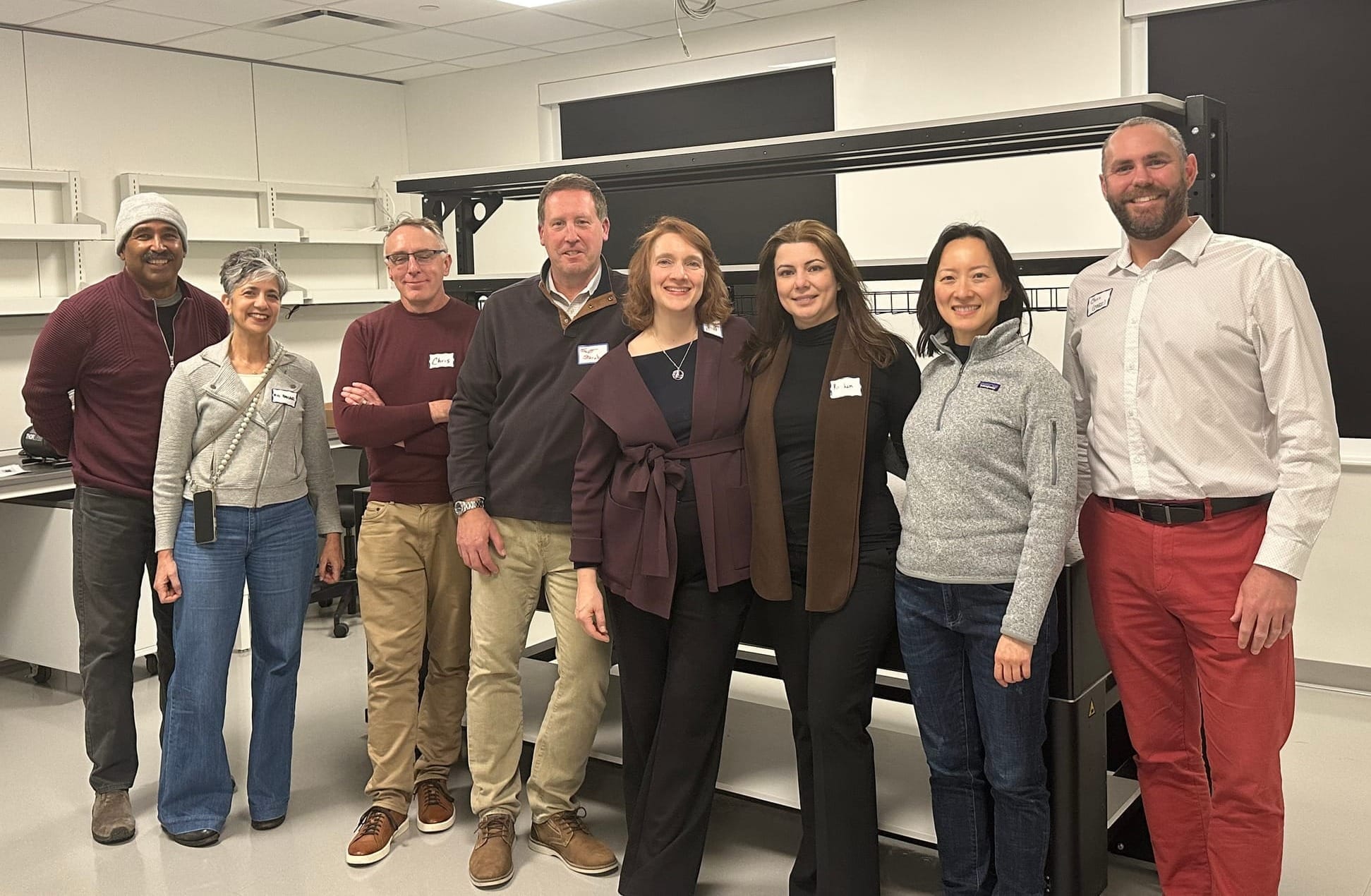

It felt like one of those “only in Boulder” nights: A brand-new AI club held its first meeting deep inside CU Boulder’s engineering complex, on a Friday evening, and the room filled up. Attendees were engaged, asked substantive questions, and kept talking long after the formal program ended.

The event marked the debut of the AI Collective Boulder, a new chapter of the AI Collective network, a global nonprofit that boasts more than 200,000 AI enthusiasts across more than 100 locations. Its mission is to help coordinate how society navigates the rapid acceleration of technological progress.

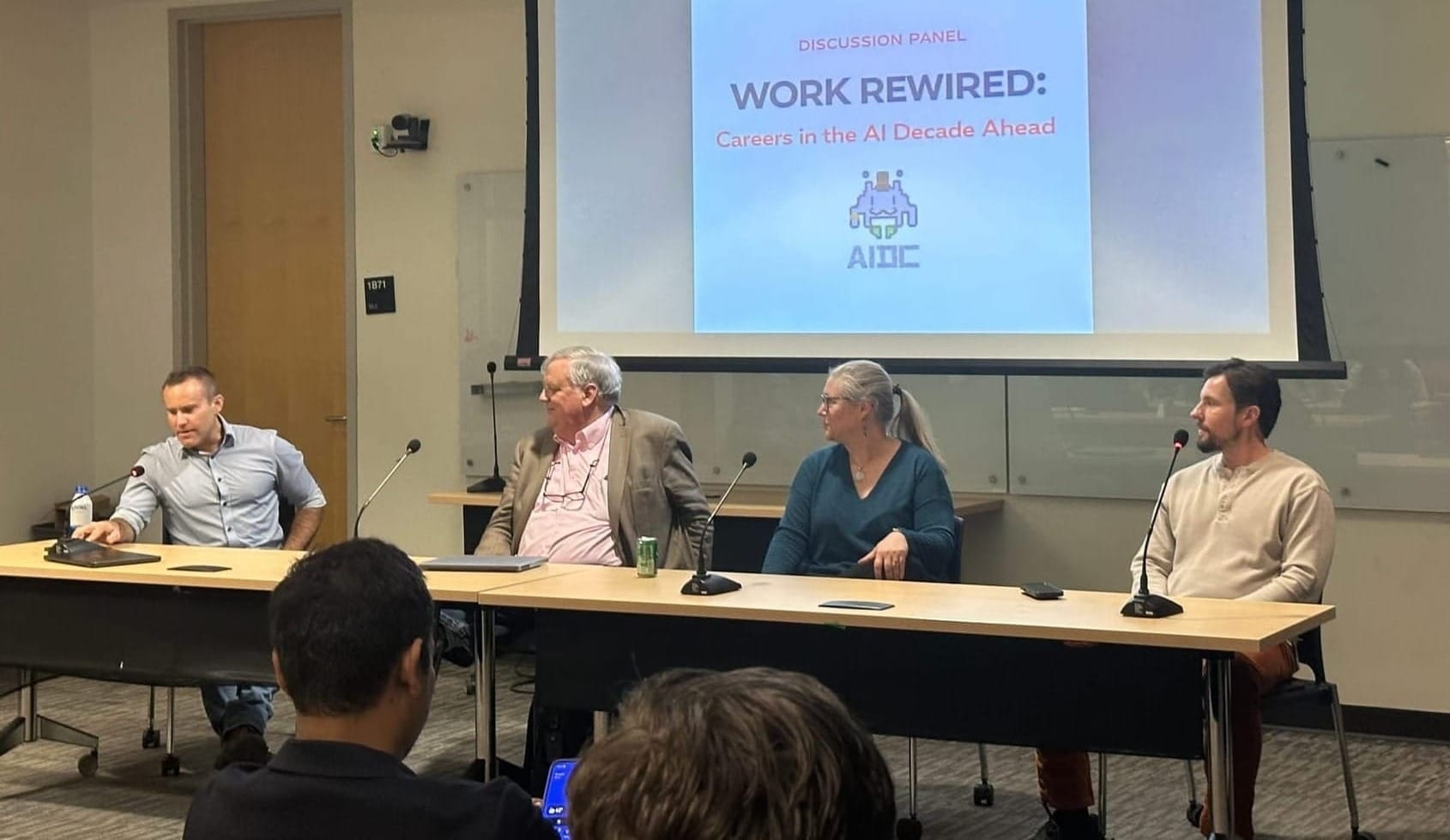

Boulder technologists Nithin Mohan and Anil Venkatesh launched the chapter last month with its inaugural program, titled “Work Rewired: Careers in the AI Decade Ahead.” The event brought students, professors, founders and working professionals into the same room at a moment when AI is already reshaping how people get hired, how work gets done, and what “career readiness” means in an ever-changing work environment.

Mohan said that he and Venkatesh are seeing AI reshape work firsthand in their day jobs, and they wanted a local forum that is as focused on people as it is on technology. They described the AI Collective as “building the human layer for the AI era” by expanding access to AI knowledge, building trust, and creating a place for serious conversations about how society navigates fast-moving tools.

Venkatesh pointed to Boulder’s density of technical talent — and CU Boulder’s role inside that ecosystem — as a key reason to start the chapter here. The goal, he said, is to connect local conversations to a broader global network and make sure that Boulder is not only building AI, but is also convening the discussions around how it should be used.

Friday, April 3: "Learning How to Learn in the Age of AI"

Looking ahead, Mohan said the group plans a steady cadence of events in partnership with CU student groups, including the AI Development Club and the Leadership Development Program. Mohan suggested that formats of the events could range from panels and workshops to hackathons and open forums – intentionally designed to be useful both for people building AI systems and for those trying to understand how AI will reshape their industry.

For April 3, the AI Collective Boulder looks to do just that, having pulled together an experienced and diverse group to discuss "Learning How to Learn in the Age of AI." Scheduled speakers include the following:

- Cecil Sunder is a director of cloud & AI platforms at Microsoft, where he orchestrates AI and cloud solution strategies for the company's manufacturing clients in the U.S.

- Drew Ayling is a staff software engineer at Ford, where he empowers teams and organizations to understand value-based product delivery, while bridging communication gaps between development, quality, release, product, marketing, sales and support functions.

- Elliott Hedman is the principal UX and AI designer at Robots & Pencils. Over the past 12 years, he has led UX research at the executive level, partnering with Fortune 500 companies to build AI-powered UX strategies, scale research teams, and drive multi-million-dollar product impact. He has a Ph.D. from MIT in Psychophysiology, AI, and User Research.

- Andres Sepulveda Morales is the creative technologist and strategist at Red Mage Creative Technologies, where he blends technical implementation with strategy, workshops, and people-first methodology to demystify tech. He's also the treasurer of Rocky Mountain AI (parent of the Rocky Mountain AI Interest Group) and the founder of the RMAIIG subgroup, Fort Collins AI for Everyone.

- Karen Crouch is an instructional design and technology consultant at CU Boulder's Center for Teaching and Learning. She has a background in international and multicultural education, immersive technology, LMS management, and making education inclusive.

Looking back: a practical demo, then a bigger conversation

At the AI Collective's first meeting at the end of February, the evening opened with a demo of the new AI tool from entrepreneur Brent Cody, founder of FileForge. Cody framed a problem many organizations still struggle with: “dark data,” which can include scanned documents, handwritten notes, and images that standard enterprise search tools do not reliably index.

Cody argued that many people try to go straight from a chatbot to a polished deliverable, but better outcomes often come from using AI to build a repeatable workflow for extracting and organizing information.

From there, the conversation moved to the larger question that filled the room: What does it mean to build a career in an AI-saturated workplace?

Verify, verify, and verify again

Jim Dykes, a CU Boulder computer science professor, offered one of the cleanest definitions of the night: “I think of AI as the world’s biggest pattern matcher.”

Dykes’ recommendation to the attendees was to avoid fear, and to focus on self-discipline. His recurring theme was that AI can be useful at nearly every level of work, as long as people keep their hands on the wheel. He described an 89-year-old colleague who uses AI constantly, but who treats it as an assistant rather than an authority.

And Dykes harkened back to 1980s geopolitics, citing President Reagan's simple rule, which applies just as much in the workplace of the 2020s: “Trust, but verify.”

Diane Sieber’s warnings about the pipeline and agency

If Dykes emphasized verification, Diane Sieber emphasized agency. Sieber – a professor in the Engineering School and director of the Generative Futures Lab for AI – sketched a future where AI becomes a powerful layer for personal learning and long-term knowledge management. In other words, AI could become a way to connect ideas across years, classes, and disciplines.

“Imagine in elementary school, you start uploading to your personal knowledge management tool everything that occurs to you,” she said, describing a lifelong repository a student could query as they move from class to class and year to year.

Sieber also offered one of the sharpest warnings of the night about labor markets and education. She argued that some employers are beginning to skip entry-level hiring in the belief that AI can handle junior work, and that the long-term consequence is a broken talent pipeline. “If you don’t hire entry level workers,” she asked, “how are you going to have experts later?”

At that point, Sieber went further, saying the biggest risk is not only job displacement but also a slow erosion of responsibility and self-direction as people let tools make decisions for them. She dismissed fixation on AGI as misplaced: “The risk that’s most often discussed is artificial general intelligence, which happens to be the biggest red herring ever,” she said. What worries her more is incremental drift, the process of humans “making thousands of tiny choices over time that whittle away at our sense of agency and our sense of responsibility.”

The entrepreneur & the developer: benefits, risks, and strategies for use

Cody’s panel remarks broadened the discussion beyond tools and workflows. He called AI “another productivity tool” with real benefits, but also pointed out the “risks and rewards” that occur at both the macro and micro level. On governance, Cody argued oversight ultimately needs to be public, not voluntary. “I think that it’s going to have to be governmental,” he said, adding that corporations are unlikely to regulate themselves.

Nicholas Browdues, a software developer at Platform Engineering Labs, described a more personal, everyday use case: using AI as a kind of “sparring partner” — a way to talk through ideas when it can be difficult to find the right person or the right material at the right level. “Before AI, it was hard to find people [to bounce] ideas off so well,” he said.

What's next for the new chapter

In their post-event Q&A, Mohan and Venkatesh said they want future meetings to keep the same balance: hands-on exploration of tools paired with clear-eyed discussion of how AI reshapes work, education, and decision-making.

They described the first event as proof of concept — a room full of students, professors, founders and practitioners who came for a panel on careers and stayed for a deeper conversation about what should not be outsourced: judgment, responsibility, and the habit of thinking for yourself.

Which leads to the second gathering of the AI Collective Boulder, taking place on Friday, April 3, from 4:30 to 6:30 p.m. For this event, the group will be meeting at CU Boulder's ATLAS Institute, which promises additional space for attendees. Registration and full details can be found here.

Note that the writer serves on the board of advisors of the Rocky Mountain AI Interest Group (RMAIIG) with Diane Sieber and is also a member of the partnership program with Brent Cody's company, FileForge.